Exploring Security Data Lakes

Data lake security is the practice of ensuring that users only have access to the data they need – only specific files, or specific data within a file – as defined by the company’s security and access policies. These policies may be shaped both by the company’s internal philosophy on data access and by data privacy regulations such as GDPR and CCPA/CPRA.

The goal of effective data lake security is to give users fast, responsible access to the data so that they can continue innovating.

Understanding Security for the Modern Enterprise Data Lake

Before going into the details of data lake security, it is important to understand the following:

- The role of data lakes in modern enterprises

- The move of enterprise data lakes to the public cloud

- Data lake security and governance

The Role of Data Lakes in Modern Enterprises

The most successful companies recognize data as an asset that can help them better serve their customers, driving business value through the use of various analytics and machine learning tools. Data lakes are built to store large volumes of data in its original format, with the assumption that the data will be processed at a later date. Working with different kinds of data, whether structured or unstructured, and running a variety of workloads gives organizations the flexibility to use their data assets more effectively.

Why Enterprise Data Lakes Are Moving to the Public Cloud

More and more enterprises are moving from on-premises data centers into the cloud. This is due to two main reasons:

- It is generally more economical to use cloud vendors such as Amazon Web Services (AWS) and Microsoft Azure than to host data on-premises.

- Cloud services provide the flexibility of separating storage and compute, thereby allowing businesses to use several flavors of both open source and proprietary data processing, analytics, and machine learning frameworks.

The Challenges of Security and Governance for Data Lakes

The diversity of tools and abstractions that a data lake makes possible can also create operational problems. The major area of friction is security and governance, which can threaten the data lake’s long-term viability. Data lake security (access control) and governance (auditing and visibility) are inherently lacking in traditional data lake architecture, and they are typically overlooked in the initial stage of a data lake deployment.

In the early days, organizations typically work around these deficiencies by creating copies of the data for different users and use cases, depending on their entitlements. Even that approach leaves significant gaps: it lacks fine-grained access control across multiple compute tools and different kinds of data assets, and it offers no detailed visibility into user activity.

This problem is only exacerbated over time with the addition of more data, tools, users, and requirements from data privacy regulations (GDPR, CCPA, etc.), which drives up inefficiency, cost, and risk. In addition, by slowing analyst productivity and increasing time-to-value, these gaps ultimately keep organizations from accomplishing the main goal of investing in the data lake, which is business agility.

Creating a Data Lake Security Plan

A data lake security plan needs to address the following five important challenges:

- Data access control

- Data protection

- Data lake usage audit

- Data leak prevention

- Data governance and compliance

1. Data Access Control

In a data lake, access control is more challenging because data is stored using the object storage model. Each file object can contain a large amount of data with many different properties. The data typically is unmanaged and available to anyone across the enterprise. A data lake may consist of millions of file objects.

In a data lake, unlike with traditional relational databases, datasets are not segmented by clear boundaries. Someone with access to a particular file object can modify it, and there is no trail or history of what was modified – only a marker that the file was altered in some unspecified way.

The standard approach calls for using built-in Identity and Access Management (IAM) controls from the cloud vendor, but those are very restrictive and challenging to implement. The best approach is to restrict direct access to file objects, allowing entry only through higher-level tools such as Okera, which can enforce granular permissions and authorizations.
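As a rough illustration of routing access through a higher-level policy layer rather than raw file objects, here is a minimal Python sketch. The `Grant` and `AccessGateway` names, the role and dataset labels, and the in-memory rows are all hypothetical; this is not the API of any cloud IAM service or of the tools named above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Grant:
    """Hypothetical grant: a role may read the listed columns of one dataset."""
    role: str
    dataset: str
    columns: frozenset


class AccessGateway:
    """Toy policy layer that sits in front of the raw file objects."""

    def __init__(self, grants):
        # Index the allowed columns by (role, dataset) for quick lookup.
        self._allowed = {}
        for grant in grants:
            key = (grant.role, grant.dataset)
            self._allowed.setdefault(key, set()).update(grant.columns)

    def read(self, role, dataset, rows, requested_columns):
        """Return the requested columns, or refuse if any is not granted."""
        allowed = self._allowed.get((role, dataset), set())
        denied = set(requested_columns) - allowed
        if denied:
            raise PermissionError(
                f"{role} may not read {sorted(denied)} in {dataset}"
            )
        return [{col: row[col] for col in requested_columns} for row in rows]
```

Anything without an explicit grant is denied by default, so a new dataset or column stays invisible until someone deliberately opens it up.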

2. Data Protection

Encryption of data at rest is a requirement of most information security standards. It is traditionally implemented through third-party database encryption products from vendors such as Enveil or Vormetric. However, for enterprises using Amazon S3, Azure ADLS, or any of the other cloud data lake vendors, encryption at rest is offered as a bundled free service.

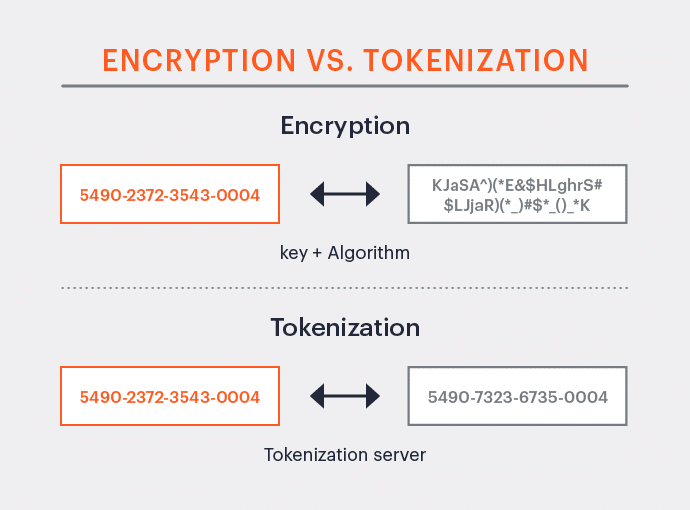

For data lake security, though encryption is desired and often required, it is not a complete solution, especially for analytics and machine learning applications. With encryption, there are two challenges:

- The format of the data field is changed, as the figure above illustrates, which may cause many applications to break.

- Encryption is only as secure as the key to encrypt and decrypt, causing a single point of failure.

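Unlike encryption, tokenization keeps the format intact, so applications that expect a particular field layout keep working. And because tokenized values are substitutes rather than ciphertext, even if a hacker gets the encryption key, they still do not have access to the original data.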

The best practice is to use the built-in encryption from the cloud provider and then add a further layer of security from a third party. This vendor should decrypt the data, tokenize it, and provide custom views depending on the user’s access rights – all done dynamically at run time.
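To make the format-preserving property of tokenization concrete, here is a minimal Python sketch of a vault-style tokenizer. The `TokenVault` class and its in-memory dictionaries are hypothetical and purely illustrative; a production system would keep the mapping in a hardened, access-controlled store.

```python
import secrets

DIGITS = "0123456789"


class TokenVault:
    """Toy vault-based tokenizer: tokens are random, not derived from a key."""

    def __init__(self):
        self._token_to_value = {}
        self._value_to_token = {}

    def tokenize(self, value: str) -> str:
        """Swap every digit for a random digit, keeping length and separators."""
        if value in self._value_to_token:
            return self._value_to_token[value]
        if not any(ch in DIGITS for ch in value):
            raise ValueError("value contains no digits to tokenize")
        while True:
            token = "".join(
                secrets.choice(DIGITS) if ch in DIGITS else ch for ch in value
            )
            # Retry on the rare collision with the input or an existing token.
            if token != value and token not in self._token_to_value:
                break
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        """Look the original value up in the vault; only the vault can do this."""
        return self._token_to_value[token]
```

A value such as `123-45-6789` comes back as another string in the same `NNN-NN-NNNN` layout, so downstream applications that validate the field format are unaffected, while the original is recoverable only through the vault lookup.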

3. Data Lake Usage Audit

Data lakes often contain data from many sources in the enterprise, including data that may have been purchased from outside the company. Each dataset is used by many end users, but the two key stakeholders are the data owner and the data steward. It is critical for data owners and stewards to maintain open communication and follow enterprise data governance rules before making data available to other users within the organization.

Data lake owners often struggle to justify their investment in the data lake, and need information such as:

- Which users are accessing the data lake and how much data are they using?

- Which applications/tools are being used in the data lake?

- How much sensitive data is in the lake?

- Which of the users are accessing sensitive data and how much?

Tools like Amazon Macie and Okera Spotlight can give visibility into the adoption and usage of the lake.
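Several of the questions above reduce to simple aggregations over access logs. The following Python sketch assumes a hypothetical event shape (`user`, `tool`, `bytes_read`, `sensitive`); real audit records will differ by platform, and the question of how much sensitive data is in the lake needs a content scan rather than access logs.

```python
from collections import Counter


def summarize_usage(events):
    """Aggregate access events into per-user and per-tool usage metrics."""
    bytes_by_user = Counter()
    events_by_tool = Counter()
    sensitive_bytes_by_user = Counter()
    for event in events:
        bytes_by_user[event["user"]] += event["bytes_read"]
        events_by_tool[event["tool"]] += 1
        if event["sensitive"]:
            sensitive_bytes_by_user[event["user"]] += event["bytes_read"]
    return {
        "bytes_by_user": dict(bytes_by_user),
        "events_by_tool": dict(events_by_tool),
        "sensitive_bytes_by_user": dict(sensitive_bytes_by_user),
    }


# Hypothetical sample events; real ones would come from the lake's audit trail.
SAMPLE_EVENTS = [
    {"user": "alice", "tool": "spark", "bytes_read": 500, "sensitive": False},
    {"user": "alice", "tool": "presto", "bytes_read": 200, "sensitive": True},
    {"user": "bob", "tool": "spark", "bytes_read": 300, "sensitive": True},
]

summary = summarize_usage(SAMPLE_EVENTS)
```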

4. Data Leak Prevention

Most major data leaks come from within the organization – sometimes inadvertently and sometimes intentionally. Fine-grained access control is critical to preventing data leaks. This means limiting access at the row, column, and even cell level, with anonymization to obfuscate data correctly. For example, if a marketing analyst wants to access a particular file which contains Social Security numbers, then the sensitive information should be dynamically obfuscated, rather than denying access to the entire file.
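The marketing-analyst example can be sketched in a few lines of Python. The role names, the `view_for` helper, and the choice to reveal the last four digits are assumptions made for illustration, not a prescribed policy.

```python
import re

# Matches US Social Security numbers written as NNN-NN-NNNN.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-(\d{4})\b")


def mask_ssn(text: str) -> str:
    """Hide all but the last four digits of any SSN found in the text."""
    return SSN_PATTERN.sub(r"XXX-XX-\1", text)


def view_for(role, rows, unmasked_roles=frozenset({"compliance"})):
    """Return the rows as this role may see them, masking SSNs on the fly."""
    if role in unmasked_roles:
        return [dict(row) for row in rows]
    return [
        {key: mask_ssn(val) if isinstance(val, str) else val
         for key, val in row.items()}
        for row in rows
    ]
```

The stored data is never altered; each caller receives a view computed at read time, so the analyst still gets the rest of the file instead of a blanket denial.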

But creating the policy is not enough. There must also be consistent auditing of data usage to verify that policies are indeed working as designed, and to record any violations that may occur. The audit logs can then be connected to SIEM tools such as Splunk in order to generate alerts on any suspicious data access.
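As one hedged example of turning audit records into alerts, the Python sketch below flags users with repeated denied requests and emits JSON lines that a log pipeline could forward. The event fields (`user`, `decision`), the alert name, and the threshold are hypothetical, and no specific SIEM API is implied.

```python
import json
from collections import Counter


def build_alerts(audit_events, max_denied=3):
    """Emit one JSON alert per user whose denied-access count exceeds max_denied."""
    denied = Counter(
        event["user"] for event in audit_events if event["decision"] == "deny"
    )
    return [
        json.dumps(
            {"alert": "repeated_denied_access", "user": user, "denied_count": count},
            sort_keys=True,
        )
        for user, count in sorted(denied.items())
        if count > max_denied
    ]
```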

5. Data Governance, Privacy and Compliance

Every enterprise must deal with its users’ data responsibly to avoid the reputation damage of a major data breach. In addition, many industries have regulatory requirements around the handling of data, such as PCI DSS for financial services and HIPAA for healthcare. However, data governance compliance is not solely limited to regulated industries.

In 2018, GDPR went into effect in the EU, and California followed in its footsteps with the CCPA at the start of 2020. Many US states and over 100 countries worldwide are working on their own data privacy regulations with stringent requirements for data collection and erasure.

Data lakes introduce new challenges to your data platform and governance teams. The data platform team is responsible for implementing the appropriate technology solution(s) to meet existing and newly emerging standards. Meanwhile, the data governance team needs ongoing awareness training and education in order to stay current with new regulations and develop their organization’s response.

Ensuring Security for Enterprise Data Lakes

A data lake security plan needs to address five important challenges:

- Data access control – enhancement of the built-in IAM controls to offer fine-grained access control

- Data protection – going beyond encryption for better protection

- Data lake usage audit – ensure data security by understanding what data is in the lake, who is using it, and how much they’ve used

- Data leak prevention – guarding against leaks, which can come from inside or outside the organization

- Data governance and compliance – the system must be designed to quickly enable compliance with industry and data privacy regulations

The goal of data lake security systems should be to enable agile and responsible access to the data. With GDPR fines for mishandling customers’ data reaching as high as $230 million, data lake security should be top of mind. This means staying on top of data access controls, following established standards, and creating a homogeneous policy that is easy to disseminate across the organization.